A grammar checker corrects errors in existing text, fixing punctuation, spelling, and syntax, while an AI writing assistant helps generate, restructure, or expand content from scratch. Both use artificial intelligence, but they solve different problems. For academic professionals, understanding which tool to use, and when, can significantly impact writing quality, research output, and time efficiency.

Walk into any faculty lounge today and you’ll hear two types of conversations about AI writing tools: “I use it to check my students’ grammar,” and “I use it to help draft my research summaries.” Both speakers think they’re talking about the same category of software. They’re not.

This distinction matters more than most people realize. Conflating AI writing assistants with AI grammar checkers is a bit like calling a scalpel and a surgical robot “the same thing” because both are used in surgery. They overlap at the edges, but they’re fundamentally different instruments built for fundamentally different jobs.

In our experience working with academic writing tools over the past several years, the confusion between these two categories is one of the most common, and most consequential, mistakes made by faculty, students, and institutional decision-makers alike. This post breaks down exactly what separates them, where each excels, and how to choose the right tool for your specific academic context.

What Is an AI Grammar Checker, and What Can It Actually Do?

An AI grammar checker is a reactive tool. It reads text you’ve already written and identifies problems: misspelled words, subject-verb disagreement, dangling modifiers, incorrect comma placement, inconsistent tense, and more. The better ones (tools like Trinka, for instance) go significantly further, catching academic-specific issues involving hedging language, formal tone, and discipline-specific terminology that a general-purpose checker would completely miss.

The key operational word here is “reactive.” A grammar checker does not write for you. It does not suggest new ideas, restructure your argument, or generate sentences from scratch. It acts on what exists.
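To make “reactive” concrete, here is a minimal Python sketch of a checker that only inspects and corrects text that already exists. The three rules, the `Suggestion` type, and the function names are all hypothetical and chosen for illustration; real academic checkers such as Trinka rely on trained language models rather than a handful of regular expressions, and this toy version assumes the flagged spans do not overlap.

```python
import re
from dataclasses import dataclass


@dataclass
class Suggestion:
    start: int        # character offset where the flagged span begins
    end: int          # character offset just past the flagged span
    message: str      # human-readable explanation
    replacement: str  # proposed fix for the flagged span


# Hypothetical toy rules: (pattern, message, function that builds the fix).
RULES = [
    (re.compile(r"\b(\w+) \1\b", re.IGNORECASE),
     "Repeated word",
     lambda m: m.group(1)),
    (re.compile(r"\bdata was\b"),
     "In formal academic writing, 'data' usually takes a plural verb",
     lambda m: "data were"),
    (re.compile(r" {2,}"),
     "Multiple consecutive spaces",
     lambda m: " "),
]


def check(text: str) -> list[Suggestion]:
    """Return suggestions for existing text; never generates new content."""
    found = []
    for pattern, message, build in RULES:
        for m in pattern.finditer(text):
            found.append(Suggestion(m.start(), m.end(), message, build(m)))
    return sorted(found, key=lambda s: s.start)


def apply_all(text: str, suggestions: list[Suggestion]) -> str:
    """Apply suggestions right to left so earlier offsets stay valid."""
    for s in sorted(suggestions, key=lambda s: s.start, reverse=True):
        text = text[:s.start] + s.replacement + text[s.end:]
    return text


draft = "The the data was collected  in 2021."
for s in check(draft):
    print(f"{s.start}-{s.end}: {s.message} -> {s.replacement!r}")
print(apply_all(draft, check(draft)))  # The data were collected in 2021.
```

Note that `check` returns an empty list for an empty document: with nothing written, a reactive tool has nothing to act on.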

For academic writing specifically, this matters enormously. A 2022 study published in Computers & Education found that automated grammar feedback tools improved student revision quality by 34% compared to no feedback, particularly in ESL (English as a Second Language) populations. That’s not a trivial gain. But those improvements were concentrated in surface-level errors, not argument development, not thesis clarity, not structural logic.

What Makes an Academic Grammar Checker Different from a Standard One?

This is where a lot of professionals get burned. General grammar checkers, designed primarily for business emails and casual writing, routinely flag perfectly correct academic language as “errors.” They’ll suggest simplifying a nuanced passive construction that, in context, is precisely the right choice. They misread hedging language (“it may be argued that…”) as weakness rather than scholarly caution.

A purpose-built academic grammar checker like Trinka is trained on millions of academic and scientific texts. It understands that “the data were collected” is correct in formal academic writing (not “was collected”), that statistical reporting follows specific syntactic conventions, and that the register of a literature review differs fundamentally from a discussion section. That domain specificity is not a minor feature; it is the entire value proposition.

What Is an AI Writing Assistant, and Where Does It Fit in Academic Work?

An AI writing assistant is a generative tool. Given a prompt, a context, or even just a few bullet points, it produces new text. It can draft an abstract, suggest transitions, elaborate on a concept, reframe a conclusion, or help a researcher break through a writing block at 11 p.m. before a submission deadline.

These tools (GPT-4-based systems, Claude, Gemini, and others) are proactive. They don’t wait for your text. They produce text.

For academics, this is both exciting and ethically complex. A writing assistant can dramatically accelerate first-draft production, which is genuinely valuable in high-volume research environments. When we tested one major AI writing assistant with a structured prompt for a systematic review introduction last year, it produced a usable first draft in under 90 seconds, something that would typically take a researcher 45–60 minutes of staring at a blank screen.

But here’s the professional opinion that often gets left out of vendor marketing: AI-generated prose requires significant human editorial oversight. It confidently hallucinates citations, flattens nuance, and has no real understanding of your specific dataset, institutional context, or the three unpublished papers sitting on your desk that should be informing your argument. It is a starting point, not a finishing line.

Is It Ethical to Use an AI Writing Assistant in Academic Contexts?

Frankly, this is still a grey area, and anyone who tells you otherwise is oversimplifying. Most major universities as of 2025–2026 have moved from outright bans to nuanced disclosure policies. The distinction that institutions most commonly draw is between using AI to generate ideas and structure (generally acceptable with disclosure) versus submitting AI-generated text as your own original scholarly contribution (generally not acceptable).

For professors and faculty, the ethical calculus is different than for students. Using an AI writing assistant to help draft a grant application or a departmental report is increasingly standard practice. The key is transparency and genuine intellectual ownership of the final product.

How Do AI Writing Assistants and Grammar Checkers Compare Head-to-Head?

| Feature / Dimension | AI Grammar Checker | AI Writing Assistant |
|---|---|---|
| Primary Function | Corrects existing text | Generates or restructures text |
| Mode of Operation | Reactive (post-writing) | Proactive (pre/during writing) |
| Academic-Specific Training | Yes (in specialized tools) | Partial (general-purpose) |
| Citation Accuracy | N/A | Low (prone to hallucination) |
| Style & Tone Guidance | Yes | Variable |
| Plagiarism Risk | None | Medium-High if unedited |
| Best Use Case | Manuscript proofreading | First-draft generation, brainstorming |
| ESL/EFL Writer Support | Excellent | Moderate |
| Institutional Trust Level | High | Moderate (with disclosure) |
| Integration with Word/LaTeX | Common | Growing |
| Typical Cost (2026) | $0–$20/month | $15–$40/month |

One thing this table can’t capture is the experiential difference between the two tools, which is profound. Using a grammar checker feels like having a meticulous colleague read your draft and hand it back with precise, actionable corrections. Using an AI writing assistant feels like dictating to a very well-read, very fast, slightly overconfident research assistant who sometimes invents sources.

Which Tool Should Academics Choose, and Can You Use Both?

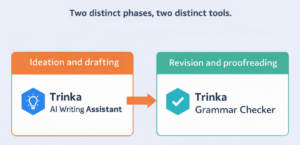

The answer is almost always: both, sequenced correctly.

Think of it as a two-stage pipeline. The AI writing assistant belongs at the front of your workflow, helping you beat the blank page, generate structural options, or rapidly summarize a body of literature for a section you need to draft quickly. The grammar checker belongs at the end, refining, correcting, and professionalizing the text before it goes to a journal editor, a conference committee, or a dissertation board.

Using them in the wrong order (or trying to use a grammar checker as a writing generator, which some people attempt) produces poor results. A grammar checker can’t write your abstract. An AI writing assistant won’t catch the difference between “affect” and “effect” in a context where both are technically grammatical.

For students specifically (undergraduate, postgraduate, and doctoral), the grammar checker is almost always the higher-value, lower-risk tool. It improves their own writing rather than replacing it. A 2023 report from the European Association for Language Testing and Assessment noted that consistent use of real-time grammar feedback tools correlated with measurable long-term improvements in writing proficiency, not just in submitted work, but in students’ underlying writing skills over time.

For deans and department heads making institutional procurement decisions, the framework is cleaner: grammar checkers are a near-universal low-risk investment. AI writing assistants require a parallel investment in academic integrity policy and faculty training to deploy responsibly.

What Should You Look for in an Academic Grammar Checker in 2026?

Not all grammar checkers are created equal, and the gap between a general-purpose tool and an academic-specific one has widened significantly in recent years. Based on practical evaluation, the criteria that matter most for academic and research writing are:

1. Discipline-specific language models. Does the tool understand the conventions of your field, whether that’s biomedical writing, legal scholarship, or social science research? Generic tools fail here consistently.

2. Beyond grammar: style and clarity scoring. The best tools flag not just grammatical errors but also academic style issues: overly complex sentence structures, inappropriate colloquialisms, inconsistent formality, and the dreaded “nominalization overload” that plagues academic writing.

3. Privacy and data security. For researchers working with sensitive data or unpublished findings, how the tool handles your text matters. Does it store your documents? Does it use your input to train future models? These are not hypothetical concerns; they are institutional liability questions.

4. Integration with existing workflows. A grammar checker that requires you to leave your LaTeX environment or your institutional Word template to paste text into a browser is one that will stop being used within two weeks.

Conclusion

The distinction between AI writing assistants and AI grammar checkers is not semantic; it’s strategic. One helps you build the house; the other ensures the walls are straight. Reaching for the wrong tool at the wrong stage of the writing process costs time, introduces risk, and often produces work that falls short of what you’re capable of.

For the academic community (students refining their theses, faculty preparing manuscripts, administrators drafting institutional communications), the practical takeaway is this: invest in an academic-specific grammar checker as your baseline writing infrastructure, and approach AI writing assistants as powerful but carefully managed supplements, not replacements for genuine scholarly thought.

The best writing still comes from you. These tools just help it get to the page cleaner and faster.

Enhance Your Writing with Trinka’s Grammar Checker

Trinka’s Grammar Checker is designed to help writers produce clear, polished, and publication-ready content with ease. Whether you’re drafting academic papers, professional documents, or blog posts, Trinka ensures your writing is precise, consistent, and impactful, making it a trusted companion for anyone aiming to communicate effectively in English.