AI disclosure policies fail when students see them as traps rather than tools. Research shows that non-disclosure is not usually dishonesty. It is a rational response to vague rules, inconsistent enforcement, and the fear that admitting AI use will trigger an accusation. Policies that build transparency into the writing process from the start, rather than asking students to self-report at the end, get dramatically better results.

The policy exists. Students are not following it.

Most universities now have some form of AI use policy. The problem is that having a policy is not the same as having one that works.

A 2023 survey of 50 leading global universities found that 57% had guidelines in place asking students to acknowledge or cite AI use. Yet a 2024 study at King’s Business School, King’s College London, found that 74% of students failed to complete a mandatory AI declaration on their coursework cover sheet, even though every other field on the same form was routinely filled in. The declaration was the one part students skipped.

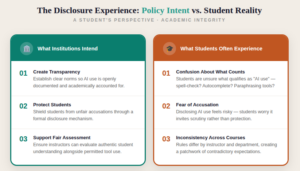

The researchers identified four reasons behind this: fear of academic consequences, unclear guidelines, inconsistent enforcement across courses, and peer influence. None of those are character flaws. All of them are predictable responses to a policy environment that asks for honesty without first building the conditions for it.

Why students stay silent even when AI use is permitted

This is the part that surprises many faculty. Students are not just hiding unauthorized AI use. Many are hiding use that was probably fine, because they cannot tell the difference.

At the 2025 EDUCAUSE Annual Conference, researchers from Lamar University and Texas A&M described students juggling conflicting class-level and assignment-level AI policies with no reliable way to know when they were in the clear. Ashley Dockens, Associate Provost of Digital Learning at Lamar University, noted that students are being held to a standard they were never clearly taught, and that most misuse is not malicious. It is a response to confusion and pressure.

Put yourself in that position. You use AI to brainstorm ideas. You are not sure if that counts as a violation. You know detection tools make errors. Staying silent feels like the safer choice. That calculation is rational. It is not dishonest. And it will keep repeating as long as disclosure carries more risk than silence.

The King’s Business School study found that students also feared their instructor would think less of them, assume laziness, or grade them differently if they disclosed AI use. One student said directly: “Who does the marking and how the person positions it can lead to a lot of bias.” That fear is grounded in real experience, not paranoia.

What “clear policy” actually means in practice

The most common advice institutions give faculty is to write clear AI guidelines in their syllabus. This is necessary but not sufficient. Clarity is not just about what the words say. It is about whether students know, before they begin an assignment, exactly what is allowed and what is not, in plain terms they can act on.

A 2025 study of 124 undergraduates and seven faculty at two U.S. R1 universities found that while students recognized classroom-level policies, institutional guidelines felt unclear and inconsistent. A global survey of 40 leading universities found that even where institutional leaders expressed positive attitudes toward AI, formal policies often lacked clarity, coherence, or actionable detail.

Clarity, as students experience it, means knowing the answer to these specific questions before they start writing: Can I use AI to brainstorm? Can I use it to edit grammar? Can I use it to research? If I do, what do I need to tell you, and how? The more specifically a faculty member can answer those questions in writing, the less ambiguity students have to navigate on their own.

Some faculty are now using tiered disclosure frameworks. These give each assignment an explicit AI permission level, ranging from “no AI at any stage” to “AI permitted throughout with documentation.” The AI Assessment Scale developed by Leon Furze (2024) offers one such model. The point is not which framework you use. The point is that students should never have to guess.

The enforcement problem that clear language alone cannot solve

Even well-written policies run into a second problem: inconsistency across courses. When a student follows an AI policy in one class and gets flagged for the same behavior in another, the lesson they learn is not “understand the rules.” It is “the rules are arbitrary.”

A 2025 qualitative study of 58 students and 12 faculty at a research university in Hong Kong found that students described significant “invisible labor” in trying to decode ambiguous or inconsistent policies. That labor, the mental effort of figuring out what is actually allowed in each course, is exhausting and demoralizing. It also drives students toward silence, because silence at least avoids the risk of getting it wrong.

This is a structural problem that individual faculty cannot fully solve on their own. Departments need shared frameworks. Not identical policies, because disciplinary context matters, but shared principles about what disclosure means, how it is documented, and how it is treated when a student provides it honestly.

Shifting from self-report to process transparency

The most durable fix to the disclosure problem is not better wording. It is a different model for how transparency happens.

Self-reporting asks students to make a judgment about their own behavior after the fact and then document it honestly. That puts a significant cognitive and emotional burden on the student, in a context where honesty carries risk. It also produces data that is unverifiable. An institution cannot confirm what a student discloses, and it cannot confirm what a student does not disclose.

Process transparency works differently. Instead of asking students to report what they did, it makes the writing process itself reviewable. Revision sequences, thinking pauses, copy-paste events, and AI content interactions are captured as the document is built. The disclosure question becomes less fraught because the process is already documented. The student does not have to decide what to tell the instructor. The instructor can see what happened.

This also changes the psychological dynamic. Disclosure feels less like confessing and more like sharing. A student whose writing process is documented has evidence they can point to. A student who wrote authentically has nothing to fear from that record. A student who borrowed heavily from AI without engaging meaningfully with the content has a record that reflects that.

It is worth being honest about the limitations. Process documentation raises privacy questions that institutions need to address clearly. Students should be told in advance that their writing session is being recorded, what data is collected, who can access it, and how long it is kept. Treating it like any other LMS activity log, with informed consent and clear governance, is the standard to aim for. Without that transparency, the tool creates a different kind of anxiety.

What faculty can do this semester

Institutional change takes time. There are things faculty can do now, at the course level, that do not require a central mandate.

The first is to talk about disclosure rather than just writing it in the syllabus. Faculty who explain to students why the policy exists, what problem it is trying to solve, and why being honest protects them rather than exposing them get better compliance. Students who understand the reasoning behind a rule are more likely to follow it, as research on academic motivation consistently shows.

The second is to frame disclosure as part of the learning, not separate from it. When students explain how they used AI in a reflective note attached to their assignment, it becomes an academic exercise rather than a compliance form. That framing changes how students approach it.

The third is to be explicit about what happens when a student discloses. If a faculty member says, “Disclosing AI use will not automatically lower your grade,” and means it, students will start to believe it. If disclosures are consistently handled fairly, the culture around them will shift. That trust is built one course at a time.

Disclosure works when transparency is the safer choice

The most lasting change faculty can make is in how they frame the stakes. If students see disclosure as a way to “catch” them, they will stay silent. If they see it as a record that protects them and helps their instructor understand their work, they will be more open.

AI disclosure was never meant to be a mechanism for catching dishonesty. It is about making the learning process visible, creating a record of how students actually work, and building accountability into that process rather than bolting it on after the fact.

For institutions building out their authorship validation workflows, tools like Trinka’s DocuMark add a submission integrity layer that makes writing process claims reviewable rather than assumed. By documenting the writing session itself, they shift the conversation away from end-stage self-reporting and toward structured, verifiable process evidence, giving both students and faculty a more defensible basis for trust than disclosure forms alone can provide.

Frequently Asked Questions

If a student discloses AI use honestly, are they more likely to be penalized?

That depends on whether the AI use was permitted for that assignment and on how the instructor handles disclosure. Faculty who clearly communicate that honest disclosure is safe, and then treat it that way consistently, reduce the fear that drives non-disclosure. Penalizing students for disclosing permitted use is the fastest way to destroy trust in a policy.

Can tiered AI permission levels work across large courses with many students?

Yes. Tiered frameworks work best when the levels are simple (for example: no AI, AI for planning only, AI permitted with documentation) and communicated at the start of each assignment, not just in the syllabus. They reduce the cognitive load of decision-making for students.

What should a disclosure statement actually include?

At minimum: which AI tool was used, what it was used for, and at what stage of the writing process. Some faculty also ask students to reflect on how the AI output was modified or evaluated. Short is better than complex. A two-sentence disclosure is more likely to get submitted than a lengthy form.

Does requiring disclosure create more work for faculty?

Not necessarily. The goal is not to audit every disclosure in depth but to create a norm of transparency. Faculty who treat disclosed AI use as part of the assignment, asking students to reflect on it, spend less time investigating suspected misconduct and more time on actual teaching conversations.

How do you enforce a disclosure policy when you cannot verify it?

Verification is exactly where self-report disclosure reaches its limit. Faculty can create conditions that make disclosure feel safe and expected. But without process-level evidence of the writing session, unverified self-report is the only mechanism available. This is the gap that process documentation tools are designed to close.